Intro

Blockchain, while initially designed solely for peer-to-peer transfers (Bitcoin), has since evolved into a general-purpose global execution runtime. The introduction of smart contract blockchains (Ethereum being the first) has enabled the creation of decentralized apps (dApps) that now cater to users in countless verticals, including:

- Decentralized Finance (DeFi) and tokenized real-world assets (RWA)

- Decentralized Social applications (DeSoc)

- Decentralized Gaming with peer-to-peer game economies (GameFi)

- Decentralized physical infrastructure (DePIN)

- Decentralized creator economy (Music, Videos, NFTs)

- Scalable execution (L2 chains, Rollups, Bridges, Solana)

- Bringing off-chain and real-world data on-chain (Oracles like Chainlink)

- Permanent and long-term storage for large data (Arweave, Filecoin)

- Application-specific databases and APIs (TheGraph, POKT, Kwil, WeaveDB)

- Infinite horizontal scalability: the capacity of the lake should grow indefinitely as new nodes join

- Permissionless data access: the data can be uploaded and queried without any gatekeeping or centralized control

- Credible neutrality: no barrier for new nodes to join the network

- Trust-minimized queries: the data can be audited, and all clients can verify the query result

- Low maintenance cost: the price per query should be negligible

- The raw data is uploaded into permanent storage by the data providers, which act as oracles but for “big data”

- The data is compressed and distributed among the network nodes

- Node operators have to bond a security deposit, which can be slashed for byzantine behavior

- Each node efficiently queries the local data with DuckDB

- Any query can be verified by submitting a signed response to an on-chain smart contract

Design Overview

The following actors participate in the SQD Network:

- Data Providers

- Workers

- Scheduler

- Logs Collector

- Reward Manager

- Data Consumers

Data Providers

Data Providers produce data to be served by SQD Network. Currently, the focus is on on-chain data, and data providers are blockchains and L2s. At the moment, SQD only supports EVM- and Substrate-based chains, but we plan on adding support for Cosmos and Solana in the near future. Data providers are responsible for ensuring the quality and timely provision of data. During the bootstrapping phase, Subsquid Labs GmbH acts as the sole data provider for the SQD Network, serving as a proxy for chains from which the data is ingested block-by-block. The ingested data is validated by comparing hashes. It’s then split into small compressed chunks and saved into persistent storage, from which the chunks are randomly distributed among the workers.

Scheduler

The scheduler is responsible for distributing the data chunks submitted by the data providers among the workers. The scheduler listens to updates of the data sets, as well as updates of the worker sets, and sends requests to the workers to download new chunks and/or redistribute the existing data chunks based on the capacity and the target redundancy for each dataset. Once a worker receives an update request, it downloads the missing data chunks from the corresponding persistent storage.
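The distribution step can be illustrated with a minimal sketch. It assumes each worker exposes a capacity counted in chunk slots and that every chunk must land on `redundancy` distinct workers chosen at random; the function name, the slot-based capacity model, and the seeded random choice are all hypothetical and not taken from the actual scheduler.

```python
import random
from typing import Dict, List


def assign_chunks(
    chunks: List[str],
    capacity: Dict[str, int],
    redundancy: int,
    seed: int = 0,
) -> Dict[str, List[str]]:
    """Assign every chunk to `redundancy` distinct workers with free slots.

    Hypothetical model: capacity is a number of chunk slots per worker.
    """
    rng = random.Random(seed)
    free = dict(capacity)  # remaining slots per worker
    assignment: Dict[str, List[str]] = {}
    for chunk in chunks:
        candidates = [w for w, slots in free.items() if slots > 0]
        if len(candidates) < redundancy:
            raise ValueError("not enough free capacity for the target redundancy")
        chosen = rng.sample(candidates, redundancy)
        for worker in chosen:
            free[worker] -= 1
        assignment[chunk] = chosen
    return assignment


workers = {"worker-a": 4, "worker-b": 4, "worker-c": 4}
plan = assign_chunks(["chunk-0", "chunk-1", "chunk-2", "chunk-3"], workers, redundancy=2)
```

A real scheduler would also react to workers joining or leaving and rebalance existing chunks, which this sketch leaves out.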

Workers

Workers contribute storage and compute resources to the network. They serve the data in a peer-to-peer manner for consumption and receive SQD tokens as compensation. Each worker has to be registered on-chain by bonding 100,000 SQD tokens, which can be slashed if the worker provably violates the protocol rules. SQD holders can also delegate to a specific worker to signal the reliability of the worker and earn a portion of the rewards. The rewards are distributed each epoch and depend on:

- The number of previous epochs the worker stayed online

- The amount of data served to clients

- The number of delegated tokens

- Fairness

- Liveness during the epoch

Logs Collector

The Logs Collector’s sole responsibility is to collect the liveness pings and the query execution logs from the workers, batch them, and save them into public persistent storage. The logs are signed by the workers’ P2P identities and pinned to IPFS. The data is stored for at least 6 months and used by other network participants.

Reward Manager

The Reward Manager is responsible for calculating and submitting worker rewards on-chain for each epoch. The rewards depend on:

- Worker liveness during the epoch

- Delegated tokens

- Served queries (in bytes; both scanned and returned sizes are accounted for)

- Liveness since the registration

Data Consumers

To query the network, data consumers have to operate a gateway or use an externally provided service (public or private). Each gateway is bound to an on-chain address. The number of requests a gateway can submit to the network is capped by a number calculated from the amount of locked SQD tokens. Consequently, the more tokens the gateway operator locks, the more bandwidth it is allowed to consume. One can think of this mechanism as if the locked SQD yields virtual “compute units” (CU) based on the period the tokens are locked. All queries cost the same price of 1 CU (until complex SQL queries are implemented). The query cap is calculated by:

- Calculating the virtual yield on the locked tokens (in SQD)

- Multiplying by the current CU price (in CU/SQD)

Boosters

The locking mechanism has an additional booster design to incentivize gateway operators to lock their tokens for longer periods of time in exchange for more CU. The longer the lock period, the more CUs are allocated per SQD/yr. The APY is calculated as BASE_VIRTUAL_APY * BOOSTER.

BASE_VIRTUAL_APY = 12% and 1 SQD = 4000 CU.

For example, if a gateway operator locks 100 SQD for 2 months, the virtual yield is 2 SQD, which means it can perform 8000 queries (8000 CU).

If 100 SQD are locked for 3 years, the virtual APY is 12% * 3 = 36% (a booster of 3), so the operator gets CUs worth 108 SQD; that is, it can submit up to 432000 queries to the network within the period of 3 years.
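The arithmetic behind both examples can be reproduced with a short sketch. The constants come from the text; the function name is hypothetical, and because the full booster schedule is not given here, the booster is passed in explicitly (1 for the 2-month lock and 3 for the 3-year lock, as implied by the examples).

```python
BASE_VIRTUAL_APY = 0.12  # 12% per year, from the text
CU_PER_SQD = 4000        # 1 SQD = 4000 CU, from the text


def compute_units(locked_sqd: float, lock_years: float, booster: float = 1.0) -> int:
    """Return the CU allowance for a lock.

    The booster schedule is not specified in the text, so the caller supplies it.
    """
    virtual_apy = BASE_VIRTUAL_APY * booster
    virtual_yield_sqd = locked_sqd * virtual_apy * lock_years
    return round(virtual_yield_sqd * CU_PER_SQD)


two_months = compute_units(100, 2 / 12, booster=1)  # 2 SQD of virtual yield
three_years = compute_units(100, 3, booster=3)      # 108 SQD of virtual yield
```

Since every query currently costs 1 CU, the returned value is also the number of queries the gateway may submit during the lock period.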

Query Validation

The SQD Network provides economic guarantees for the validity of the queried data, with the added possibility of validating specific queries on-chain. All query responses are signed by the worker who executed the query, acting as a commitment to the query response. Anyone can submit such a response on-chain, and if it is deemed incorrect, the worker bond is slashed. The smart contract validation logic may be dataset-specific depending on the nature of the data being queried, with the following options:

- Proof by Authority: a white-listed set of on-chain identities decides on the validity of the response.

- Optimistic on-chain: after the validation request is submitted, anyone can submit a claim proving the query response is incorrect. For example, assume the original query was “Return transactions matching the filter X in the block range [Y, Z]” and the response is some set of transactions T. During the validation window, anyone can submit a Merkle proof for some transaction t matching the filter X yet not in T. If no such proofs are submitted during the decision window, the response is considered valid.

- Zero-Knowledge: a zero-knowledge proof that the response exactly matches the request. The zero-knowledge proof is generated off-chain by a prover and is validated on-chain by the smart contract.
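The optimistic option can be illustrated with a minimal sketch of the challenge check. It assumes a binary SHA-256 Merkle tree and a proof encoded as a list of (sibling hash, sibling-is-left) pairs; the hash function, the proof encoding, and every function name are hypothetical, and the block-range check for [Y, Z] is omitted for brevity.

```python
import hashlib
from typing import Callable, List, Set, Tuple


def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def verify_merkle_proof(leaf: bytes, proof: List[Tuple[bytes, bool]], root: bytes) -> bool:
    """Check that `leaf` is included under `root`.

    Each proof step is (sibling_hash, sibling_is_left); this encoding is an assumption.
    """
    node = sha256(leaf)
    for sibling, sibling_is_left in proof:
        node = sha256(sibling + node) if sibling_is_left else sha256(node + sibling)
    return node == root


def challenge_succeeds(
    tx: bytes,
    proof: List[Tuple[bytes, bool]],
    block_root: bytes,
    matches_filter: Callable[[bytes], bool],
    response: Set[bytes],
) -> bool:
    """A challenge wins if tx is provably on-chain, matches X, and is missing from T."""
    return (
        verify_merkle_proof(tx, proof, block_root)
        and matches_filter(tx)
        and tx not in response
    )


# Tiny two-leaf tree used as a usage example.
tx_a, tx_b = b"transfer:alice->bob", b"transfer:carol->dave"
root = sha256(sha256(tx_a) + sha256(tx_b))
proof_for_b = [(sha256(tx_a), True)]


def is_transfer(tx: bytes) -> bool:
    return tx.startswith(b"transfer:")


omitted = challenge_succeeds(tx_b, proof_for_b, root, is_transfer, response={tx_a})
complete = challenge_succeeds(tx_b, proof_for_b, root, is_transfer, response={tx_a, tx_b})
```

Here `omitted` is a successful challenge because the response left out a matching transaction, while `complete` fails because the response already contains it.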

SQD Token

SQD is the ERC-20 protocol token that is native to the SQD Network ecosystem. The token smart contract is to be deployed on the Ethereum mainnet and bridged to Arbitrum One. This strategy seeks to ensure the blockchain serves as a reliable, censorship-resistant, and verifiably impartial ledger, facilitating reward settlements and managing access to network resources. The SQD token is a critical component of the SQD ecosystem. Use cases for the SQD token are focused on streamlining and securing network operations in a permissionless manner:

- Alignment of incentives for infrastructure providers: SQD is used to reward node operators that contribute computation and storage resources to the network.

- Curation of network participants: Via delegation, the SQD token design includes built-in curation of nodes, facilitating permissionless selection of trustworthy operators for rewards.

- Fair resource consumption: By locking SQD tokens, consumers of data from the decentralized data lake may increase rate limits.

- Network decision making: SQD tokenholders can participate in governance, and are enabled to vote on protocol changes and other proposals.

Appendix I — Metadata

The metadata has the following structure:reserved_space. The dataset can be in the following states:

The scheduler changes the state from SUBMITTED to IN_PREPARATION and ACTIVE. The COOLDOWN and DISABLED states are activated automatically if subscription payments aren’t made.

At the initial stage of the network, the ability to disable datasets is granted to Subsquid Labs GmbH; this is going to be replaced by automatic payments at a later stage.

Appendix II — Rewards

The network rewards are paid out to workers and delegators for each epoch. The Reward Manager submits an on-chain claim commitment, from which each participant can claim. The rewards are allocated from the rewards pool. Each epoch, the rewards pool unlocks APY_D * S * EPOCH_LENGTH in rewards, where EPOCH_LENGTH is the length of the epoch in days, S is the total (bonded + delegated) amount of staked SQD during the epoch and APY_D is the (variable) base reward rate calculated for the epoch.

Rewards pool

The SQD supply is fixed initially, and the rewards are distributed from a pool to which 10% of the supply is allocated at TGE. The reward pool has a protective mechanism that caps the amount of rewards distributed per epoch, and the cap is subject to change via governance. During the initial 3-year bootstrapping phase, the reward cap and the total supply of SQD are fixed. Afterwards, the reward cap drops significantly until a governance motion concludes on the inflation schedule and new tokens are minted to replenish the reward pool. Unlike most projects, which fix the inflation schedule before launch, postponing this decision leaves more flexibility and allows the community to analyze 3 years of historical data to make an informed decision on the future issuance of SQD.

Reward rate

The reward rate depends on two factors: utilization of the network and staked supply. The network utilization rate is defined asd.

The actual capacity is calculated as

WORKER_CAPACITY is a fixed storage per worker, set to 1TB. CHURN is a discounting factor to account for the churn, set to 0.9.
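As a rough illustration of how the two constants might combine, the sketch below assumes the actual capacity is the number of active workers multiplied by WORKER_CAPACITY and CHURN. Only the constants are given in the text; the product form, the worker-count input, and the function name are assumptions.

```python
WORKER_CAPACITY_TB = 1.0  # fixed storage per worker (1TB), from the text
CHURN = 0.9               # churn discount factor, from the text


def actual_capacity_tb(active_workers: int) -> float:
    """Assumed form: active_workers * WORKER_CAPACITY * CHURN (not confirmed by the text)."""
    return active_workers * WORKER_CAPACITY_TB * CHURN


capacity = actual_capacity_tb(1000)  # hypothetical network of 1000 active workers
```

Under these assumptions, a network of 1000 workers would count as roughly 900 TB of usable capacity.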

The target APR (365-day-based rate) is then calculated as:

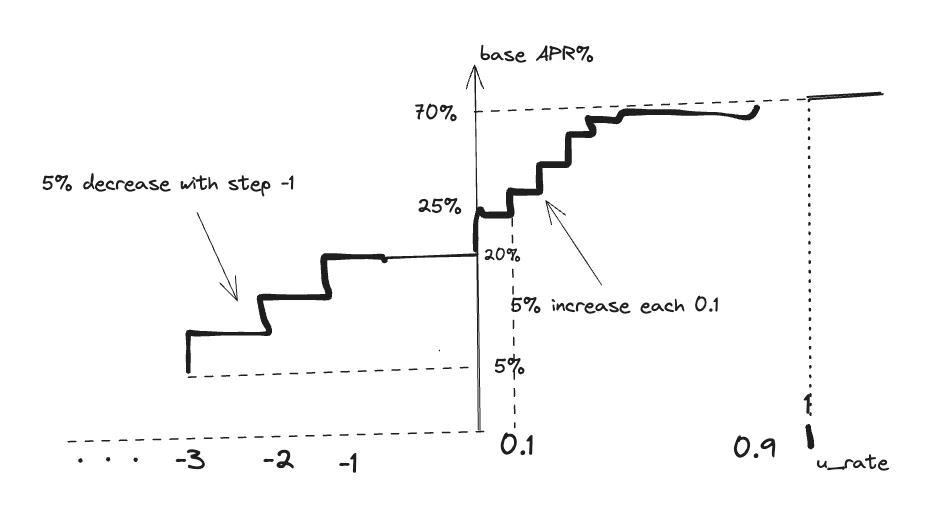

base_apr is projected to be around 20% in the equilibrium state, when the actual worker capacity matches the desired network capacity, set externally. It is increased up to 70% to incentivize more workers to join the network until the target capacity is reached:

APR_CAP cut-off is added to cap the total rewards per epoch.

One defines first

Worker reward rate

For each epoch, rAPR is calculated, and the total of rAPR/365 * (bond + staked) * EPOCH_LENGTH is unlocked from the rewards pool. It is split into the worker liveness reward and the worker traffic reward.
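The epoch total is plain arithmetic and can be sketched as follows. The function and parameter names are hypothetical, the epoch length is taken in days as elsewhere in this appendix, and the sample numbers are illustrative only.

```python
def epoch_reward_total(r_apr: float, bond: float, staked: float, epoch_length_days: float) -> float:
    """Total reward for one epoch: rAPR/365 * (bond + staked) * EPOCH_LENGTH."""
    return r_apr / 365 * (bond + staked) * epoch_length_days


# Illustrative numbers: 20% rAPR, 100,000 SQD bonded, 50,000 SQD delegated, 1-day epoch.
total = epoch_reward_total(0.20, bond=100_000, staked=50_000, epoch_length_days=1)
```

With these inputs the total comes to about 82.19 SQD for the epoch.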

Let S[i] be the stake for i-th worker and T[i] be the traffic units (defined below) processed by the worker. We define the relative weights as

s[i] and t[i] correspond to the contribution of the i-th worker to the total stake and to total traffic, respectively.

The traffic weight t[i] is a geometric average of the normalized scanned (t_scanned[i]) and the egress (t_e[i]) traffic processed by the worker. It is calculated by aggregating the logs of the queries processed by the worker during the epoch, and for each processed query, the worker reports the response size (egress) and the number of scanned data chunks.
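A minimal sketch of the traffic weight, assuming "normalized" means each worker's share of the network-wide total for that metric. The function names and sample figures are hypothetical.

```python
from math import sqrt
from typing import List


def normalize(values: List[float]) -> List[float]:
    """Scale values so they sum to 1 (all zeros if the total is zero)."""
    total = sum(values)
    return [v / total if total else 0.0 for v in values]


def traffic_weights(scanned: List[float], egress: List[float]) -> List[float]:
    """t[i] = sqrt(t_scanned[i] * t_e[i]) for each worker i."""
    t_scanned = normalize(scanned)
    t_e = normalize(egress)
    return [sqrt(a * b) for a, b in zip(t_scanned, t_e)]


# Two workers: scanned chunk counts and egress bytes aggregated from the epoch logs.
weights = traffic_weights(scanned=[10, 30], egress=[100, 300])
```

Because a geometric mean is used, a worker needs a meaningful share of both scanned and egress traffic to obtain a high weight; the resulting weights do not necessarily sum to 1 when the two shares differ.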

The max potential yield for the epoch is given by rAPR described above:

r[i] for the i-th worker is discounted:

D_traffic is a Cobb-Douglas type discount factor defined as

- Always in the interval [0, 1]

- Goes to zero as t_i goes to zero

- Neutral (i.e., close to 1) when s_i ~ t_i, that is, the stake contribution is fair (proportional to the traffic contribution)

- Excess traffic contributes only sub-linearly to the reward
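One function with the listed properties is a capped power of the traffic-to-stake ratio. The sketch below is an assumption and not necessarily the formula used by the network: both the functional form `min((t_i / s_i) ** alpha, 1)` and the exponent `alpha = 0.1` are hypothetical choices made for illustration.

```python
def d_traffic(t_i: float, s_i: float, alpha: float = 0.1) -> float:
    """Hypothetical Cobb-Douglas style discount: min((t_i / s_i) ** alpha, 1).

    t_i is the worker's traffic weight, s_i its stake weight (s_i must be positive).
    """
    if s_i <= 0:
        raise ValueError("stake weight must be positive")
    return min((t_i / s_i) ** alpha, 1.0)


no_traffic = d_traffic(0.0, 0.1)    # factor collapses to zero
fair_share = d_traffic(0.1, 0.1)    # neutral: traffic matches stake
half_share = d_traffic(0.05, 0.1)   # mild discount for under-serving
excess = d_traffic(0.5, 0.1)        # capped at 1 in this sketch
```

In this particular form, traffic beyond the stake share does not raise the factor above 1, which is a stricter reading of the sub-linearity property.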

D_liveness is a liveness factor calculated as the percentage of the time the worker is self-reported as online. A worker sends a ping message every 10 seconds, and if there are no pings within a minute, the worker is deemed offline for this period of time. The liveness factor is the percentage of the time (with minute-based granularity) the worker is live. We suggest a piecewise linear function with the following properties:

- It is 0 below a reasonably high threshold (set to 0.8)

- Sharply increases to near 1 in the “intermediary” regime 0.8-0.9

- The penalty around 1 is diminishing
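The liveness measurement and one possible piecewise linear factor can be sketched as follows. The minute-window interpretation, the function names, and the knee value of 0.9 reached at a liveness of 0.9 are assumptions; only the 0.8 threshold and the 0.8-0.9 intermediary regime come from the text.

```python
from typing import Iterable


def liveness_ratio(ping_times: Iterable[float], epoch_start: float, epoch_end: float) -> float:
    """Fraction of whole one-minute windows in the epoch with at least one ping."""
    total_minutes = int((epoch_end - epoch_start) // 60)
    if total_minutes <= 0:
        return 0.0
    window_end = epoch_start + total_minutes * 60
    live_minutes = {
        int((t - epoch_start) // 60) for t in ping_times if epoch_start <= t < window_end
    }
    return len(live_minutes) / total_minutes


def d_liveness(ratio: float) -> float:
    """Hypothetical piecewise linear factor: 0 below 0.8, steep on 0.8-0.9, flat near 1."""
    if ratio < 0.8:
        return 0.0
    if ratio < 0.9:
        return (ratio - 0.8) / 0.1 * 0.9         # rises from 0 to 0.9
    return 0.9 + (min(ratio, 1.0) - 0.9) / 0.1 * 0.1  # rises from 0.9 to 1.0


# A 10-minute epoch in which the worker pings every 10 seconds for the first 9 minutes.
ratio = liveness_ratio(range(0, 540, 10), epoch_start=0, epoch_end=600)
factor = d_liveness(ratio)
```

The steep middle segment makes dropping below 90% liveness costly, while the last few percent of uptime change the factor only slightly.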

D_tenure is a long-range liveness factor incentivizing consistent liveness across the epochs. The rationale is that

- The probability of a worker failure decreases with the time the worker has been live, so freshly spawned workers are rewarded less

- The discount for freshly spawned workers discourages the churn among workers and incentivizes longer-term commitments

Distribution between the worker and delegators

The total claimable reward for the i-th worker and the stakers is calculated simply as r[i] * s[i]. Clearly, s[i] is the sum of the (fixed) bond b[i] and the (variable) delegated stake d[i]. Thus, the delegator rewards account for r[i] * d[i]. This extra reward part is split between the worker and the delegators:

- The worker gets: r[i] * b[i] + 0.5 * r[i] * d[i]

- The delegators get: 0.5 * r[i] * d[i], effectively attaining 0.5 * r[i] as the effective reward rate.
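The split can be checked with a short sketch. Since the part being divided is the delegated portion r[i] * d[i] and the two shares must add up to the total r[i] * (b[i] + d[i]), the sketch halves r[i] * d[i]; the function name and the sample numbers are hypothetical.

```python
from typing import Tuple


def split_reward(r_i: float, bond: float, delegated: float) -> Tuple[float, float]:
    """Return (worker_reward, delegators_reward) for one worker and one epoch."""
    worker = r_i * bond + 0.5 * r_i * delegated
    delegators = 0.5 * r_i * delegated
    return worker, delegators


# Illustrative epoch rate of 0.1%, a 100,000 SQD bond and 20,000 SQD delegated.
worker_reward, delegators_reward = split_reward(0.001, bond=100_000, delegated=20_000)
```

In this example the worker receives about 110 SQD and the delegators about 10 SQD, which together equal r[i] times the total stake of 120,000 SQD.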

- Make the worker accountable for r[i]

- Incentivize the worker to attract stakers (the additional reward part)

- Incentivize the stakers to stake for a worker with high liveness (and, in general, high r[i])
